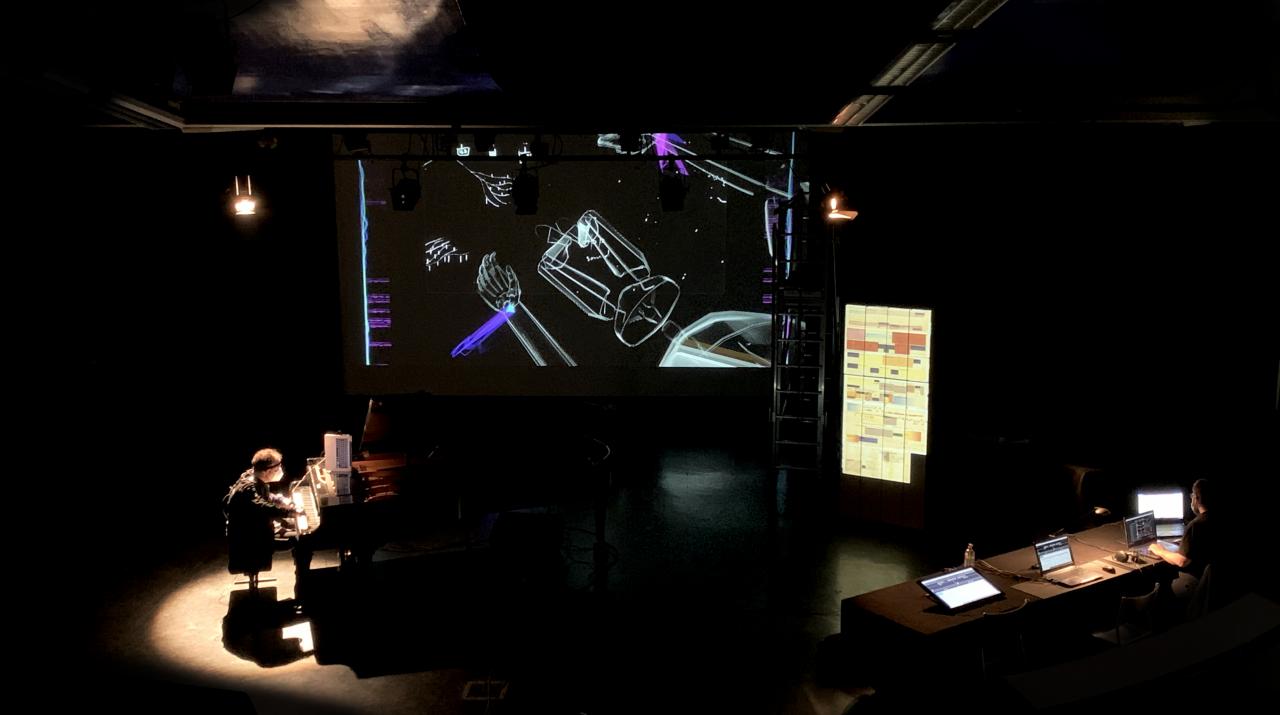

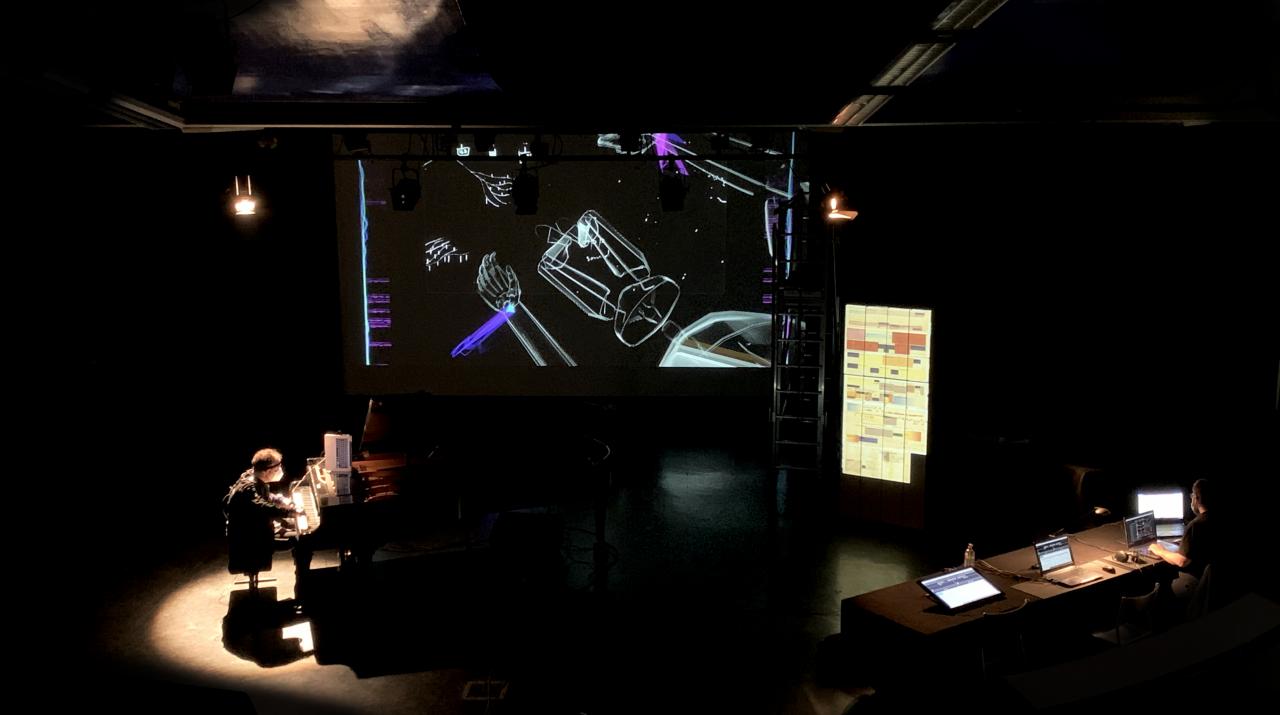

Pavlos Antoniadis, GesTCom system development

PROJECT DURATION

2020 - 2021

PROJECT OWNER(S)

Pavlos Antoniadis

IN COLLABORATION WITH

EA 4010 Visual Arts and Contemporary Arts (AIAC), EA 1572 Aesthetics, Musicology, Dance and Musical Creation (MUSIDANSE)

The objective of my research project at EUR-ArTeC is to develop the GesTCom system into a multimodal tool for tracking piano scores in real time. The scientific and artistic domains involved are music performance, motion capture and analysis, human-computer interaction, systematic musicology, embodied cognition theories and composition. The proposed tool could enhance the live performance experience by visualizing performance data and broadcasting it to the audience in real time during the concert. At the same time, the GesTCom+ system could change how composers and performers collaborate, turning the score into a shared, interactive and adaptive platform for the creative process and, through machine learning, even for the learning process. The project thus addresses all three links of the musical chain: reception, interpretation and composition.

This research is based on my hypothesis of the embodied navigation of complex notation: instead of a paradigm in which the musical text is internalized, I propose treating musical notation interactively, as a dynamic element. As a theoretical framework, I draw on ecological psychology and embodied cognition. Their basic principle is that musical cognition is not reducible to mental processes alone but is distributed across the brain, the body and the environment. Musical notation is theorized as a space of physical states and potentialities, which ecological psychology calls “affordances”. The pianist manipulates and processes the notation through physical movement, as if it were part of the instrument or an extension of it. Extending this metaphor, the pianist can be said to touch the notation as one touches the instrument, and this action is itself part of cognition.

The GesTCom (Gesture Cutting through Textual Complexity) system integrates tools for gestural interaction with the score and for coding augmented, interactive notations. Its methodology comprises four steps: recording and reproducing multimodal data; qualitative analysis of the data; offline processing of the notation according to the multimodal data; and monitoring of variable performances through a real-time, multimodal and interactive tablature.

Biography

Pavlos Antoniadis (PhD in music & musicology, University of Strasbourg, co-supervised with IRCAM, Dissertation Award 2019; MA in piano performance, University of California, San Diego; MA in musicology, University of Athens) is a pianist, musicologist and technologist. As an embodied cognition researcher in systematic musicology and a developer of systems for computer-assisted learning and music performance, he specializes in the contemporary piano repertoire. As a pianist, Pavlos has performed in Europe, Asia and America with the ensembles Work in Progress-Berlin, Kammerensemble Neue Musik Berlin, Phorminx, ERGON, Accroche Note and the National Orchestra of Thessaloniki. He has recorded for the Mode (2015 German Record Critics’ Prize) and Wergo labels. As a researcher, Pavlos has published in English, German and French; he developed the GesTCom system with Frédéric Bevilacqua (IRCAM Music Research Residency 2014) and has been invited by several institutions worldwide to give lecture-performances and workshops. He is currently a postdoctoral researcher at EUR-ArTeC (Université Paris 8, MUSIDANSE & AIAC) and a collaborator of the Interaction-Son-Musique-Mouvement (Interaction-Sound-Music-Movement) team at IRCAM; he will continue his research at TU Berlin (Audiokommunikation) as a Humboldt Stiftung fellow (2021 and 2022).

PROJECT TEAM

Research tutors: Makis Solomos (Paris 8 University), Jean-François Jégo (Paris 8 University)